The "How" of Data Custody for Web3

Apollo Week #4 - A more technical look at what the protocol is and does

In previous weeks, we've been focused on a more macro perspective - on the "Why" we need to build better for Web3. This week, I'd like to focus a little more on the "How".

Infrastructure and protocols are tough to discuss succinctly but effectively; it takes a lot to get someone interested in less than 5 minutes, let alone to help them understand. We've been hard at work on an abbreviated deck to help that conversation along (which can be found here), and figured this newsletter can serve as a pseudo-appendix!

How do we enable users to maintain custody of their data while improving their digital experience?

This is the ultimate goal - to have our cake, and eat it too. Web2 has proven that it's not enough simply to return custody of data to end-users (both consumers and businesses); Web3 needs to fundamentally improve the experience as well. As boots-on-the-ground engineers, we can break this lead question down further:

How do I manage access to the user's data without centralizing it?

How do I secure that data, at rest, in transit, and in use?

As decentralized applications become more sophisticated, and rely more heavily on data stored on networks like Filecoin, developers will have a greater need to manage custody. Unlike Web2 and centralized hardware, access management for networked storage should persist at the transport layer. By defining how we approach access in a protocol, we can enable the interoperability Web3 deserves.

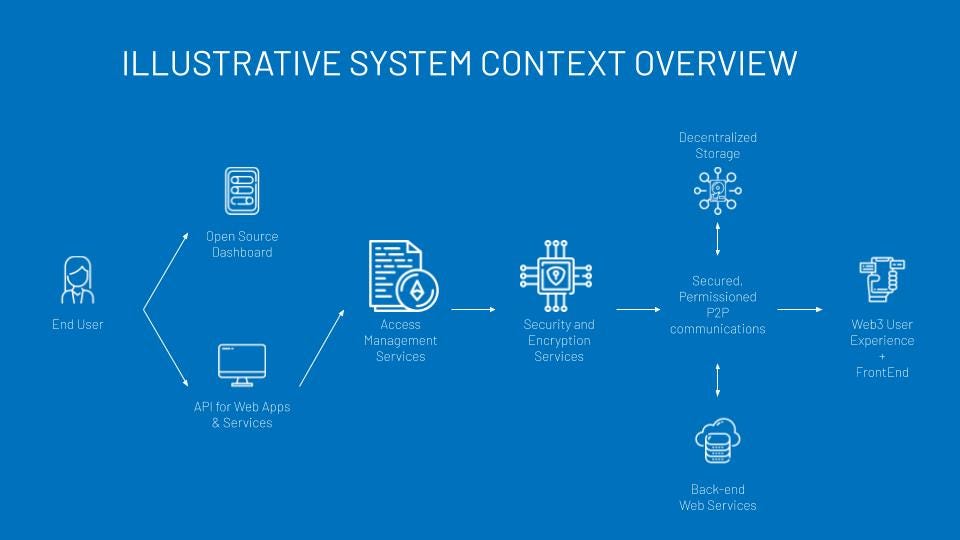

This protocol is underpinned by two core services, Access Management and Encryption, and driven by open-source, decentralized principles.

Access Management at the Transport Layer

With networked, decentralized storage, access needs to be managed in a networked, decentralized way. We believe the most effective way to do this today is through the blockchain, specifically, Ethereum/EVM.

The protocol is defined within a system of smart contracts, which allow it to serve as a bridge between end-user (whether a person or a service) and the decentralized storage network.

When data is committed to storage, the protocol creates a unique, autonomous token which then effectively "owns" the data, providing critical access management primitives, as well as some microservices core to the protocol execution.

Instance-based Access Control

In centralized systems, we more often than not see Role-based Access Control (RBAC) schemes, as they are easier to implement and manage at scale. RBAC limits interoperability severely, as roles are defined and specified for particular applications, e.g. Admin, Editor, Commenter, Viewer.

In Web3 and decentralized storage, addressable content provides an organic segmentation of data; you are no longer managing a mountain of data across customers, users, and applications on a single hardware system. Rather, every file receives its own address and is stored across the network.

For this reason, Access Management in Guer's protocol more closely resembles Instance-based Access Control (IBAC). This is an access structure with data as the root, rather than roles, resulting in much greater control. It is, by definition, more granular than RBAC.

The protocol provides three management primitives - Read, Write, and Admin. Developers can use these primitives to build application-specific access schemes, adopting the same "legos" approach as other decentralized innovations. By adhering to the protocol primitives, complex access systems can be simplified and made interoperable across the decentralized web, regardless of storage network or blockchain.
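To make the data-rooted model concrete, here is a minimal off-chain sketch in Python. It is an illustration only, not the protocol's smart contract code: the `AccessRegistry` class, its method names, and the sample addresses are all hypothetical. Only the three primitives (Read, Write, Admin) come from the text above.

```python
from enum import Flag, auto


class Permission(Flag):
    """The three protocol primitives named in the text."""
    NONE = 0
    READ = auto()
    WRITE = auto()
    ADMIN = auto()


class AccessRegistry:
    """Hypothetical registry keyed by content address rather than by role."""

    def __init__(self):
        # content address -> {account address -> Permission}
        self._grants = {}

    def register(self, content_id, owner):
        """Record new content and give its uploader every primitive."""
        self._grants[content_id] = {
            owner: Permission.READ | Permission.WRITE | Permission.ADMIN
        }

    def allows(self, content_id, account, permission):
        """True if the account holds the permission on this content."""
        held = self._grants.get(content_id, {}).get(account, Permission.NONE)
        return permission in held

    def grant(self, content_id, caller, grantee, permission):
        """Only an Admin of this specific content may grant access to it."""
        if not self.allows(content_id, caller, Permission.ADMIN):
            raise PermissionError("caller is not an admin of this content")
        current = self._grants[content_id].get(grantee, Permission.NONE)
        self._grants[content_id][grantee] = current | permission

    def revoke(self, content_id, caller, grantee, permission):
        """Remove a permission; again restricted to this content's Admins."""
        if not self.allows(content_id, caller, Permission.ADMIN):
            raise PermissionError("caller is not an admin of this content")
        current = self._grants[content_id].get(grantee, Permission.NONE)
        self._grants[content_id][grantee] = current & ~permission
```

Because every grant hangs off a single content address, two applications that agree on these three primitives can read each other's permissions without sharing a role vocabulary.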

Core functionalities

The smart contract system, as the immutable source of the protocol, is also unique in that it provides some basic functionality. These functions are intentionally limited, and are further restrained by the EVM, in order to provide stability, reliability, and consistency. The hybrid, novel nature of a smart contract-based protocol capable of basic utility also allows us to eliminate the need for certain 3rd parties, like Certificate Authorities, DNS Resolvers, and other IP-based technologies.

These functions include:

Identity and Access Verification Services: Using elliptic curve cryptography (ECC) keypairs, such as Ethereum's private/public key and address, allows us to verify identity using gasless, signed messages. Once a request's identity has been confirmed, the smart contract can reference its registries to find which permissions, if any, that address has been granted.

Transport Layer Security: If appropriate, the smart contract will initiate the encryption services. The contract facilitates two forms of security - a P2P handshake protocol for secured synchronous communication, and proxy re-encryption for asynchronous distribution of permissioned content.

Governance and Recovery: The protocol allows for social recovery and other advanced governance, if needed. In its simplest form, upon creation, the token will define a consensus mechanism for introducing a new admin address to recover access to the data. These terms can be customized further or removed altogether.

Proxy Payments: The protocol tokens also have basic wallet functions, allowing them to manage, utilize, and receive payment transactions. These are intended to simplify the facilitation of network fees associated with requests and encryption computations.
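As one possible reading of the Governance and Recovery function above, the consensus mechanism could be a simple M-of-N vote among pre-registered addresses. The sketch below assumes exactly that; the `RecoveryPolicy` class, the "guardian" terminology, and the threshold rule are illustrative assumptions rather than the protocol's actual terms.

```python
class RecoveryPolicy:
    """Hypothetical M-of-N vote for introducing a new admin address."""

    def __init__(self, guardians, threshold):
        self.guardians = frozenset(guardians)
        if not 1 <= threshold <= len(self.guardians):
            raise ValueError("threshold must be between 1 and the guardian count")
        self.threshold = threshold
        # proposed admin address -> set of guardians who approved it
        self._approvals = {}

    def approve(self, guardian, new_admin):
        """Record one guardian's vote; return True once the threshold is met."""
        if guardian not in self.guardians:
            raise PermissionError("address is not a registered guardian")
        votes = self._approvals.setdefault(new_admin, set())
        votes.add(guardian)  # a set ignores repeat votes from the same guardian
        return len(votes) >= self.threshold


# Usage: two of three guardians must agree before the new admin is accepted.
policy = RecoveryPolicy(["0xG1", "0xG2", "0xG3"], threshold=2)
first = policy.approve("0xG1", "0xNewAdmin")
second = policy.approve("0xG2", "0xNewAdmin")
```

In an on-chain version the same bookkeeping would live in the token's contract storage, and each vote would arrive as a signed transaction or message.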

Security and Encryption for Web3

Access Management systems are only as useful as their ability to enforce the permissions they provide. This is particularly challenging for decentralized systems built on public, distributed ledgers like Ethereum. We began exploring Web3-native encryption as a result, in the hopes of devising a standard means of facilitating encryption for privacy and security in a truly trustless, decentralized manner.

Through our research and discussions with the community, we determined that Trusted Execution Environments (TEEs), such as Intel SGX or enclaves built with the Open Enclave SDK, were the missing piece to encryption on the decentralized web. TEEs allow us to perform cryptographic functions in a verifiably private environment, directly on the CPU of host hardware, without any additional hardware or dependencies. Layer-2 solutions such as SKALE require their validators to be TEE-compatible, effectively creating a network of TEEs available for private computation.

By pairing TEEs with the blockchain, we are able to provide at least two core encryption services for Web3: A Web3-native Alternative to TLS, and a decentralized Proxy Re-encryption Service. Ultimately, in order to enforce decentralized permissions policies, we needed to find a decentralized means of encryption for data in transit, at upload, and for retrieval.

Introduction to dSLS: Decentralized Session-Layer Security

We began with encryption in transit, as it's a bit more straightforward. At the conclusion of the dSLS protocol, both parties will have an AES128 key for use in symmetric encryption, just as they would with TLS, and can continue to use existing libraries like OpenSSL for communications.

Broadly speaking, the protocol works as follows:

A connection request is sent to the smart contract associated with that data or user

The smart contract verifies, via signed messages, the digital ID and permissions of both parties (Alice & Bob)

If connection is permitted, the smart contract will package system context information along with a Session ID, and publish to a log to get picked up by a TEE

Within enclave, TEE creates a seed hash from system context, and adds randomness, to generate an AES128 key

TEE will then "wrap" two copies of the AES128 key. One copy with party Alice's public key, and one copy with Bob's public key.

Both encrypted copies of the key are then published back to the smart contract token

Both parties can retrieve wrapped AES128 via blockchain, and unwrap the AES128 key via their private key

With the AES128 key now usable, Alice & Bob can send encrypted messages using any Web2 secure communication method
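The key-generation step inside the enclave (a seed hash from system context, plus randomness) can be sketched with the Python standard library. This is a minimal illustration under stated assumptions: the context fields, the function name `derive_session_key`, and the choice of HMAC-SHA256 as the mixing function are hypothetical, not the dSLS specification. Wrapping the key with each party's public key is omitted, since it needs an asymmetric-crypto library.

```python
import hashlib
import hmac
import json
import os


def derive_session_key(context, entropy=None):
    """Derive a 16-byte (AES-128) key from session context plus randomness."""
    # Canonical JSON so the same context always hashes to the same seed.
    encoded = json.dumps(context, sort_keys=True, separators=(",", ":")).encode()
    seed = hashlib.sha256(encoded).digest()
    # Fresh randomness keeps the key unpredictable even though the
    # context is published on a public ledger.
    if entropy is None:
        entropy = os.urandom(32)
    # HMAC-SHA256 mixes the seed with the randomness; keep the first 128 bits.
    return hmac.new(entropy, seed, hashlib.sha256).digest()[:16]


# Hypothetical system context, as packaged by the smart contract.
context = {
    "session_id": "session-0001",
    "parties": ["0xAlice", "0xBob"],
    "block_number": 123456,
}
session_key = derive_session_key(context)
```

The `entropy` parameter exists only so the derivation can be reproduced in tests; inside a TEE it would always come from the enclave's own random source.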

Beyond Client-side Encryption: Decentralized Proxy Re-encryption

In order to better facilitate accessibility, mobility, and functionality, we need to find a decentralized, remote means of performing encryption. We're actively researching how we can use TEEs and smart contracts in a proxy re-encryption service, with the goal of enabling the same convenience and functionality as Web2. Specifically, this would allow us to:

Encrypt data for storage without depending on client-side encryption

Distribute encrypted data with revocable keys

Not require active participation of content creator/uploader to distribute content

Similar to the dSLS protocol, the proxy re-encryption would utilize smart contracts to verify digital identities and permissions, as well as initiate the encryption process for the TEE. The current challenge is re-provisioning a random TEE for decryption; in a trustless, decentralized system, we want the TEEs to be stateless upon initialization. Once the TEE is provisioned for that particular set of data, it can:

Import encrypted data from storage

Securely return it to a plaintext state within the TEE

Re-encrypt it with a revocable, temporary distribution key like AES128

Validate the re-encrypted content with Hash-based Message Authentication Codes (HMAC)

Encrypt and distribute (via blockchain) key and HMAC, along with new data address

Distribute session-encrypted data via preferred method (p2p, tcp/ip, cached network storage)
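The validation step in the list above can be illustrated with the Python standard library. The sketch covers only the HMAC portion: it assumes the content has already been re-encrypted, and the function names, the SHA-256 choice, and the sample bytes are hypothetical.

```python
import hashlib
import hmac
import os


def tag_content(mac_key, ciphertext):
    """Compute an HMAC-SHA256 tag over the re-encrypted content."""
    return hmac.new(mac_key, ciphertext, hashlib.sha256).digest()


def verify_content(mac_key, ciphertext, tag):
    """Check the tag in constant time before trusting the ciphertext."""
    return hmac.compare_digest(tag_content(mac_key, ciphertext), tag)


# Stand-in for content the TEE has already re-encrypted.
ciphertext = b"re-encrypted bytes produced inside the TEE"
mac_key = os.urandom(32)  # distributed to the recipient alongside the session key
tag = tag_content(mac_key, ciphertext)

ok = verify_content(mac_key, ciphertext, tag)
tampered = verify_content(mac_key, ciphertext + b"!", tag)
```

A recipient who holds the MAC key can run `verify_content` on whatever arrives from storage or a peer, and discard the data if the tag does not match.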

This type of functionality is being explored by other projects and solutions, such as centralized proxy re-encryption services, fully homomorphic encryption, or even independent networks of TEE-enabled machines. We believe the solution must be at the protocol level, reducing dependencies on centralization and less-distributed economics. By remaining agnostic, we can leverage any network of TEEs or services, while maintaining compatibility across applications.

The Importance of Open-Source and Integrations

To that end, as a protocol on public networks, we fully acknowledge the only way for this to work is through transparency, verifiability, and accessibility. We'll look deeper into this later, but for now we want to emphasize the fact that, as a protocol, there will be several methods of interacting with your data, whether directly in an application, through an open-source client, or directly from the terminal.

Everything from the structure of the access management system to the attestable encryption code pushed to the TEEs will be available for view, verification, validation, and development.

Apollo Update - Week #4

Alright, we're about a week late on this one, but we were busy prepping for our mid-Apollo expo! We’ll get the Week #5 update out by the end of this week :)

Technical Update

We've got the beginnings of the demo worked out:

As you can see, we've been able to modify a FOSS Web2 application to utilize Ethereum and IPFS as a back-end. We believe it's critical for Web3 to make it easy to migrate Web2 applications over to decentralized infrastructure, and are excited that this is working!

Our protocol smart contracts are configured to manage access, and we have some basic encryption in place!

Over the next two weeks, the demo will:

have a more robust access management structure in place, using the IBAC primitives as its root

demonstrate how an end-user can manage their data directly through an open-source client, in addition to natively in-app

share and revoke access with any ETH address

securely connect to Excalidraw-web3 from any device, using your ETH address instead of an IP address for keygen and distribution

Project Update

We continue to refine our messaging and content to better communicate what we're up to. We made a "quick pitch" or intro deck (which you can check out here!) that really tries to distill both the macro and micro picture into a 5 minute conversation. If you have a minute, take a look and leave some comments!

Thanks for reading, and as always, we love to hear from you! Send us a note at guer.co or info@guer.co!